Introduction

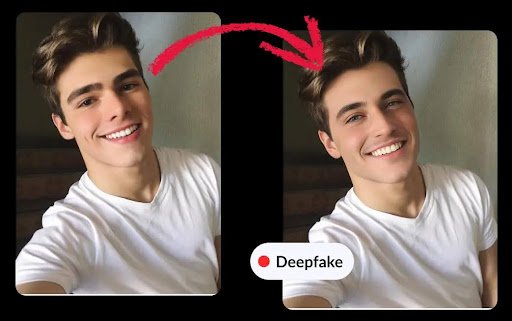

In recent years, artificial intelligence has made remarkable strides, particularly in the field of synthetic media. Deepfakes (hyper-realistic manipulated videos, images, and audio) have become increasingly accessible and convincing. While this technology offers creative and entertainment opportunities, it also presents serious risks, including misinformation, identity fraud, and reputational damage.

As a result, deepfake detection technology has emerged as a critical tool in maintaining digital trust. This article explores how deepfake detection works, why it matters, and how organizations can leverage it to protect their digital ecosystems.

Understanding Deepfakes and Their Growing Threat

Deepfakes are created using machine learning techniques such as generative adversarial networks (GANs), in which a generator network learns to produce content that a second, discriminator network cannot tell apart from real data. Because these systems learn patterns directly from real footage and recordings, the fake content they produce can be highly convincing. The consequences extend far beyond harmless entertainment: deepfakes can be used in political manipulation, financial scams, and cybercrime.

To counter this threat, many organizations are integrating solutions like a deepfake detection API into their platforms, enabling real-time identification of manipulated media. This proactive approach helps prevent the spread of harmful content and builds trust among users and stakeholders.

How Deepfake Detection Technology Works

Deepfake detection systems rely on a combination of artificial intelligence, computer vision, and signal processing techniques. These tools analyze inconsistencies in media that are often invisible to the human eye.

Key detection methods include:

- Facial irregularities: Detecting unnatural movements, blinking patterns, or mismatched facial expressions

- Audio analysis: Identifying inconsistencies in tone, pitch, or speech patterns

- Metadata inspection: Examining file history and compression artifacts

- Biometric cues: Analyzing physiological signals in videos, such as the subtle skin-color changes caused by a person's heartbeat

By combining these techniques, detection systems can flag suspicious content more reliably than any single signal allows, even as deepfake generation methods continue to evolve.
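The idea of combining several signals can be illustrated with a small sketch. It assumes each detection method (facial, audio, metadata, biometric) has already produced a score between 0 and 1, and it fuses them with a simple weighted average. The `DetectorScore` class, the weights, and the 0.5 threshold are all hypothetical choices made for illustration; production systems typically learn how to combine signals from labeled data rather than hard-coding weights.

```python
from dataclasses import dataclass


@dataclass
class DetectorScore:
    """Output of one hypothetical detector for a single media file."""
    name: str      # e.g. "facial", "audio", "metadata", "biometric"
    score: float   # assumed range: 0.0 (likely authentic) to 1.0 (likely manipulated)
    weight: float  # illustrative trust placed in this detector


def fuse_scores(scores: list[DetectorScore]) -> float:
    """Return the weighted average of the individual detector scores."""
    total_weight = sum(s.weight for s in scores)
    if total_weight <= 0:
        raise ValueError("at least one detector must have a positive weight")
    return sum(s.score * s.weight for s in scores) / total_weight


def is_suspicious(scores: list[DetectorScore], threshold: float = 0.5) -> bool:
    """Flag the media when the fused score reaches the (assumed) threshold."""
    return fuse_scores(scores) >= threshold


# Example with made-up scores for one video.
example = [
    DetectorScore("facial", score=0.9, weight=2.0),
    DetectorScore("audio", score=0.3, weight=1.0),
    DetectorScore("metadata", score=0.6, weight=1.0),
]
print(f"fused score: {fuse_scores(example):.3f}")
print("suspicious:", is_suspicious(example))
```

In this example a strong facial signal outweighs a weak audio signal, so the video is flagged even though one detector on its own would have let it through.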

Applications Across Industries

Deepfake detection technology is no longer limited to research labs—it is actively being deployed across various industries:

- Media and journalism: News organizations use detection tools to verify the authenticity of user-generated content before publication

- Finance: Banks and fintech companies use detection systems to prevent identity fraud during remote onboarding

- Government and defense: Agencies rely on detection tools to counter misinformation and protect national security

- Social media platforms: Platforms implement detection algorithms to identify and remove harmful deepfake content

These applications highlight the growing importance of detection technology in maintaining integrity across digital channels.

Challenges in Detecting Deepfakes

Despite significant advancements, deepfake detection is not without challenges. As generation techniques become more sophisticated, detection tools must constantly adapt.

Some key challenges include:

- Arms race between creation and detection: As AI improves, deepfakes become harder to detect

- Lack of standardization: No universally accepted benchmark exists for evaluating detection accuracy, which makes results from different tools hard to compare

- False positives and negatives: Misidentifying content can lead to reputational or operational issues

- Scalability: Processing large volumes of media in real time requires significant computational resources

Addressing these challenges requires continuous research, collaboration, and innovation in AI technologies.
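The trade-off between false positives and false negatives can be made concrete with a short sketch. It assumes a detector that outputs a score between 0 and 1 for each item and a small set of items whose true labels are known. The function names, labels, and scores below are invented for illustration and do not come from any real detector.

```python
def confusion_counts(labels, scores, threshold):
    """Count true/false positives and negatives at a given threshold.

    labels: True if the item really is a deepfake.
    scores: detector output, higher means more likely manipulated.
    """
    tp = fp = tn = fn = 0
    for is_fake, score in zip(labels, scores):
        predicted_fake = score >= threshold
        if predicted_fake and is_fake:
            tp += 1
        elif predicted_fake and not is_fake:
            fp += 1  # authentic content wrongly flagged
        elif not predicted_fake and is_fake:
            fn += 1  # deepfake that slipped through
        else:
            tn += 1
    return tp, fp, tn, fn


def error_rates(labels, scores, threshold):
    """Return false positive and false negative rates at a threshold."""
    tp, fp, tn, fn = confusion_counts(labels, scores, threshold)
    fpr = fp / (fp + tn) if (fp + tn) else 0.0
    fnr = fn / (fn + tp) if (fn + tp) else 0.0
    return {"false_positive_rate": fpr, "false_negative_rate": fnr}


# Made-up evaluation data: three deepfakes and three authentic clips.
labels = [True, True, False, False, True, False]
scores = [0.9, 0.4, 0.2, 0.7, 0.8, 0.1]

for threshold in (0.3, 0.5, 0.75):
    print(threshold, error_rates(labels, scores, threshold))
```

Lowering the threshold catches more deepfakes but flags more authentic content, and raising it does the opposite. Where to set it depends on which kind of mistake is more costly for a given platform.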

The Role of AI Ethics and Regulation

The rise of deepfakes has sparked global conversations around AI ethics and regulation. Governments and organizations are working to establish guidelines that promote responsible use of synthetic media.

Key considerations include:

- Transparency: Clearly labeling AI-generated content

- Accountability: Holding creators and distributors responsible for misuse

- Privacy protection: Preventing unauthorized use of personal data

- Collaboration: Encouraging partnerships between tech companies, regulators, and researchers

Ethical frameworks and regulatory policies play a crucial role in ensuring that deepfake technology is used responsibly while minimizing its risks.

Future Trends in Deepfake Detection

The future of deepfake detection lies in innovation and integration. Emerging trends include:

- Real-time detection: Faster systems capable of analyzing live video streams

- Blockchain verification: Using decentralized systems to verify media authenticity

- Multimodal analysis: Combining audio, video, and text analysis for higher accuracy

- AI-driven automation: Reducing human intervention through advanced machine learning models

As these technologies evolve, deepfake detection will become more robust, accessible, and essential for digital security.
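To give a feel for the verification idea behind the blockchain trend listed above, here is a minimal sketch. It uses an in-memory, hash-chained list as a stand-in for a decentralized ledger; a real deployment would rely on an actual distributed ledger or a signed provenance standard. The `MediaLedger` class and its methods are hypothetical names introduced only for this example.

```python
import hashlib

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the given bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


class MediaLedger:
    """Append-only list of media fingerprints, each chained to the previous one."""

    def __init__(self):
        self._entries = []  # list of (media_hash, entry_hash) tuples

    def register(self, media_bytes: bytes) -> str:
        """Record the fingerprint of an original media file."""
        media_hash = sha256_hex(media_bytes)
        prev_hash = self._entries[-1][1] if self._entries else GENESIS
        entry_hash = sha256_hex((prev_hash + media_hash).encode())
        self._entries.append((media_hash, entry_hash))
        return media_hash

    def is_registered(self, media_bytes: bytes) -> bool:
        """Check whether these exact bytes were registered earlier."""
        media_hash = sha256_hex(media_bytes)
        return any(stored == media_hash for stored, _ in self._entries)

    def chain_is_intact(self) -> bool:
        """Recompute every link to detect tampering with past entries."""
        prev_hash = GENESIS
        for media_hash, entry_hash in self._entries:
            if sha256_hex((prev_hash + media_hash).encode()) != entry_hash:
                return False
            prev_hash = entry_hash
        return True


ledger = MediaLedger()
original = b"bytes of the original video file"
ledger.register(original)

print(ledger.is_registered(original))              # same bytes as registered
print(ledger.is_registered(original + b"edited"))  # any change alters the hash
print(ledger.chain_is_intact())
```

One limitation to keep in mind: an exact hash changes whenever a file is re-encoded or resized, even if the content is untouched, so this approach confirms that a file is a byte-for-byte registered original but cannot judge modified copies on its own.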

Conclusion

Deepfake technology represents both an opportunity and a challenge in the digital age. While it enables creative expression, it also threatens trust, security, and authenticity. Organizations must take proactive steps to address these risks by adopting advanced detection solutions.

Integrating tools such as a deepfake detection API into digital platforms can significantly enhance the ability to identify and mitigate manipulated content. By investing in detection technology, promoting ethical AI practices, and fostering collaboration, businesses and institutions can safeguard their digital environments and maintain public trust in an increasingly AI-driven world.